How does Natural Language Processing work?

Naveen


Machine learning algorithms are good at surfacing insights. They can spot patterns we had not noticed before and use them to make predictions. Models are typically tailored to specific scenarios and combine many algorithms, which lets machines take over a lot of our work. Processing such large amounts of information quickly can be hard. Yet artificial intelligence is expanding rapidly: as newer algorithms are fed more data and trained, they make better predictions, and better predictions in turn speed up progress.

While machine learning agents really are getting better, their understanding of the language they process is less well defined. If you’re wondering what kinds of rules machine learning algorithms might follow, I’m here to break it down.

What are rules?

A rule is a sequence of commands that forms part of a pattern recognition model. Here is an example of a rule, framed as a thought experiment: suppose we’re on a roller coaster. The roller coaster doesn’t exist in any physical form. Imagine being in a car and moving forward at full speed. Now imagine hitting a bump. That would make you brake, and brake again, until you come to a complete stop.

There are many types of machine learning rules. They vary in how often they are followed, in how they differ from the rules human agents follow, and in many other details.
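To make the idea of a rule more concrete, here is a minimal sketch in Python. It assumes one common reading of "rule" in text processing: a hand-written pattern paired with the label to assign when the pattern matches. The patterns, labels, and the `apply_rules` helper are all hypothetical and chosen only for illustration.

```python
import re

# Hypothetical rules: each pairs a regular expression with a label.
# The rules are checked in order, and the first match wins.
RULES = [
    (re.compile(r"\b(refund|money back)\b", re.IGNORECASE), "billing"),
    (re.compile(r"\b(crash|error|bug)\b", re.IGNORECASE), "technical"),
]


def apply_rules(text, rules=RULES, default="other"):
    """Return the label of the first rule whose pattern matches the text."""
    for pattern, label in rules:
        if pattern.search(text):
            return label
    return default


if __name__ == "__main__":
    for message in [
        "The app shows an error on login",
        "I would like a refund",
        "Hello there",
    ]:
        print(message, "->", apply_rules(message))
```

Rules like these are easy to read and audit, but they only cover the patterns someone thought to write down, which is one reason learned models are used alongside them.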

What do we mean by reading data and drawing inferences?

In a nutshell, reading data and drawing inferences refers to an agent trying to work out its own way of recognizing a situation from a discrete language. The process is easier to follow if you think of a familiar language such as English. The theory used to approach such a problem is called semantic learning, and it often involves deductive thinking. A technique known as neural inference is a key part of neural learning. Neural inference combines the following techniques:

– Extracting meaning from data by taking in the context

– Extracting relevant knowledge about what is happening in the domain (what the grammar of the natural language is, etc.)

In particular, I have two primary techniques that I like to apply:

1. Summarize features from text:

Learn to summarize, summarize, summarize

Simply summarize texts into some form of abstract structure, keeping what matters to the intended meaning rather than the less important detail.

Read to understand…

The outcome of the summarizing stage is the text turned into a map (or a vector) of information and experiences about its context (the meaning or intent).

2. Summarize the idea of comprehension:

Accurate summaries of the target concept and an understanding of what is happening.

Read to understand…

The outcome of the summarizing stage is a map (or a vector) of the key concepts or key experiences about the context of the text (the meaning or intent).

Build a model with a detailed understanding of how the relationships between words affect what they say.
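As a rough illustration of the two steps above, the sketch below turns a sentence into a vector and then counts which words appear near each other. It assumes the simplest possible techniques, a bag-of-words count vector and a sliding-window co-occurrence count, which are stand-ins chosen for clarity and not necessarily what a production NLP system would use. The function names, the example sentence, and the window size are hypothetical.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def bag_of_words(text, vocabulary):
    """Map a text to a vector of word counts, one entry per vocabulary word."""
    counts = Counter(tokenize(text))
    return [counts[word] for word in vocabulary]


def cooccurrence(text, window=2):
    """Count unordered word pairs that occur within `window` tokens of each other."""
    tokens = tokenize(text)
    pairs = Counter()
    for i, word in enumerate(tokens):
        for other in tokens[i + 1 : i + 1 + window]:
            pairs[tuple(sorted((word, other)))] += 1
    return pairs


if __name__ == "__main__":
    sentence = "the cat sat on the mat"
    vocabulary = sorted(set(tokenize(sentence)))
    print(vocabulary)
    print(bag_of_words(sentence, vocabulary))
    print(cooccurrence(sentence).most_common(3))
```

The count vector captures what a text is about but ignores word order, while the co-occurrence counts recover a little of how words relate to their neighbors. Learned embeddings build on the same intuition with far richer representations.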

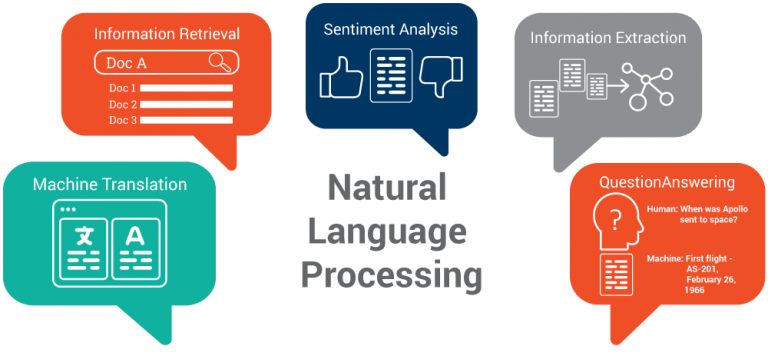

These are the two fundamental principles of machine learning. Learning with them allows machines to produce high-quality but limited predictions about situations, without having learned how to convey meaningful content. That all changes when you include natural language processing (NLP) components in machine learning.

NLP can dig deeper into a particular topic of discussion and can also see what is happening in a new topic. It’s rarely a holy grail, but given the attention NLP is getting in AI at present, maybe it someday will be. NLP technologies are extremely powerful, but in the short term you can’t create something that great with them. That’s because, in contrast to traditional machine learning algorithms, they focus on an abstract form of representation that doesn’t explain the data (the interpretation). Such an understanding doesn’t come from statistics or other methods, but from the large amounts of natural language text that NLP embeds.


Author


Naveen Pandey has more than 2 years of experience in data science and machine learning. He is an experienced Machine Learning Engineer with a strong background in data analysis, natural language processing, and machine learning. Holding a Bachelor of Science in Information Technology from Sikkim Manipal University, he excels in leveraging cutting-edge technologies such as Large Language Models (LLMs), TensorFlow, PyTorch, and Hugging Face to develop innovative solutions.
