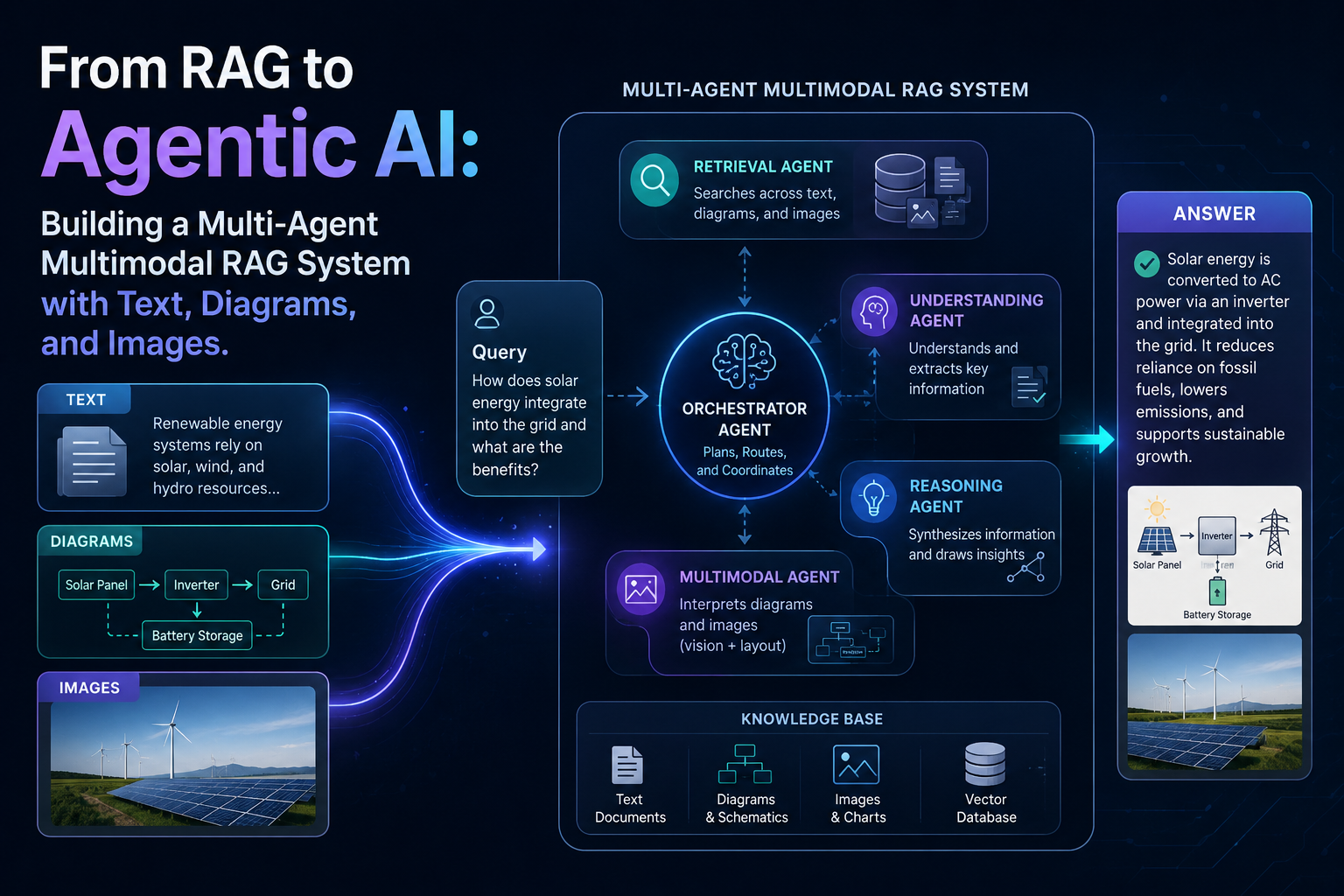

From RAG to Agentic AI: Building a Multi-Agent Multimodal RAG System with Text, Diagrams, and Images

Naveen


Traditional RAG systems retrieve information. Modern AI systems decide how to respond. That shift—from retrieval to reasoning—is what defines the next generation of intelligent systems.

In this article, we build a Multi-Agent Multimodal RAG system that not only retrieves knowledge but also decides whether to respond with text, generate a structured diagram, or produce a visual image. The system combines an LLM, vector search, and two generation tools (Mermaid and a diffusion model) in a single pipeline, which brings it closer to how production AI applications are assembled than a fixed retrieve-then-answer tutorial.

From practical experience building LLM systems, the biggest limitation of basic RAG pipelines is clear: they are static. They always follow the same steps regardless of the query. What we need instead is a system that can reason, choose tools, and adapt its behavior dynamically. That is exactly what we will build here.

What is Multimodal RAG and why is it not enough anymore?

Retrieval-Augmented Generation (RAG) is a technique that improves LLM responses by retrieving relevant information from external sources. Instead of relying only on pre-trained knowledge, the model uses retrieved context to generate more accurate answers.
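To make the retrieve-then-generate idea concrete, here is a minimal sketch that uses plain word overlap in place of embeddings and stops at building the prompt an LLM would receive. The helper names `tokenize`, `retrieve`, and `build_prompt` are illustrative assumptions, not part of any library.

```python
import re

DOCS = [
    "Transformers use self-attention mechanisms.",
    "RAG retrieves external knowledge before answering.",
    "Multi-agent systems divide tasks across agents.",
]

def tokenize(text):
    """Lowercase a string and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query, docs, k=1):
    """Rank docs by shared words with the query (toy stand-in for vector search)."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]

def build_prompt(query, docs, k=1):
    """Place the retrieved passages ahead of the question."""
    context = "\n".join(retrieve(query, docs, k))
    return f"Context:\n{context}\n\nQuestion:\n{query}"

prompt = build_prompt("How does RAG use external knowledge?", DOCS)
print(prompt)
```

A real system replaces the word-overlap score with embedding similarity, but the shape stays the same: rank, select, then prepend the selection to the question.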

In a multimodal setup, the system extends beyond text and incorporates other data types such as images. However, most implementations treat images as text by extracting their content using OCR or captions. While this allows the system to process visual data, the output remains limited to text.

This is where traditional multimodal RAG falls short. It can understand multiple modalities, but it cannot respond in multiple modalities. In real-world applications, users often expect not just explanations, but visual representations—diagrams, illustrations, and structured outputs that enhance understanding.

What is Agentic Multimodal RAG?

Agentic Multimodal RAG builds on top of traditional RAG by introducing decision-making and tool usage. Instead of following a fixed pipeline, the system uses agents to determine how to respond to a query.

In this architecture, the system does not assume that every query needs the same type of output. Instead, it analyzes the query and decides whether the response should include:

- only text

- a structured diagram

- a generated image

This transforms the system from a passive responder into an active problem-solving agent.

System Architecture: How everything works together

At a high level, the system follows a coordinated workflow.

When a user submits a query, the first component that activates is the tool selection agent. This agent analyzes the query and determines whether a visual output is required. If the query involves structured processes or architectures, the system generates a diagram. If the query is more conceptual, it produces an illustrative image. Otherwise, it responds with text only.

Once the decision is made, the system retrieves relevant context from a vector index (FAISS in this implementation). This grounds the response in stored documents rather than relying solely on the model’s internal knowledge.

The retrieved context is then passed to the language model, which generates a clear and structured answer. Finally, depending on the earlier decision, the system generates a diagram using Mermaid, an image using a diffusion model, or no visual at all.
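The order of these stages can be sketched with injected callables, so the control flow is visible without loading any model. The function `run_pipeline` and the stub lambdas below are hypothetical placeholders for illustration only.

```python
def run_pipeline(query, select_tool, retrieve, answer, make_diagram, make_image):
    """Run the stages in the order described above; every stage is an injected callable."""
    tool = select_tool(query)              # 1. decide on the output format
    context = " ".join(retrieve(query))    # 2. ground the answer in retrieved text
    result = {"tool": tool, "answer": answer(query, context), "visual": None}
    if tool == "diagram":                  # 3. optional visual step
        result["visual"] = make_diagram(query)
    elif tool == "image":
        result["visual"] = make_image(query)
    return result

# Stub stages so the control flow can be exercised without any model.
demo = run_pipeline(
    "Explain the RAG workflow",
    select_tool=lambda q: "diagram" if "workflow" in q.lower() else "none",
    retrieve=lambda q: ["RAG retrieves external knowledge before answering."],
    answer=lambda q, ctx: f"Based on: {ctx}",
    make_diagram=lambda q: "flowchart TD\n    A[Query] --> B[Answer]",
    make_image=lambda q: "<image placeholder>",
)
print(demo["tool"], "|", demo["answer"])
```

Passing the stages in as arguments is a design choice for testability; each stub can be swapped for a real agent one at a time.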

Why diagrams and images require different approaches

A key insight in building this system is understanding that not all visuals are the same.

Diffusion models, such as Stable Diffusion, are excellent at generating creative and realistic images. However, they struggle with structured outputs like diagrams, flowcharts, and labeled architectures. They are trained to produce plausible-looking pixels, not to enforce logical structure, so boxes, arrows, and text labels often come out distorted or inconsistent.

For structured visuals, programmatic tools like Mermaid are far more reliable. Mermaid renders a diagram deterministically from a text definition, so the same definition always yields the same readable layout; the LLM only has to produce valid Mermaid code.

This is why the system separates visual generation into two paths: structured diagrams and conceptual images.
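To illustrate what "programmatic" means here, the sketch below turns a list of edges into Mermaid flowchart source. The helper `to_mermaid` is a hypothetical name, and the sketch assumes labels contain no double quotes.

```python
def to_mermaid(edges, direction="TD"):
    """Render (source, target) label pairs as Mermaid flowchart source text."""
    ids = {}  # label -> short node id, assigned in first-seen order
    lines = [f"flowchart {direction}"]
    for src, dst in edges:
        for label in (src, dst):
            if label not in ids:
                ids[label] = f"N{len(ids)}"
        lines.append(f'    {ids[src]}["{src}"] --> {ids[dst]}["{dst}"]')
    return "\n".join(lines)

diagram = to_mermaid([
    ("User query", "Tool selector"),
    ("Tool selector", "Retriever"),
    ("Retriever", "Text agent"),
])
print(diagram)
```

Because the output is plain text with an explicit structure, every node and arrow is exactly what was specified, which is the property a diffusion model cannot guarantee.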

Step-by-Step Implementation

Now let’s build the system step by step.

Setting up the environment

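# Notebook cell: install the client, embedding, vector search, and diffusion libraries.
!pip install -q groq sentence-transformers faiss-cpu diffusers transformers accelerate

import os
from getpass import getpass

# Prompt for the key so it is not hard-coded in the notebook.
os.environ["GROQ_API_KEY"] = getpass("Enter Groq API Key: ")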

Initializing models

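from groq import Groq
from sentence_transformers import SentenceTransformer
from diffusers import StableDiffusionPipeline
import torch

# Groq() reads GROQ_API_KEY from the environment.
client = Groq()

# Sentence embedding model used for retrieval.
embedding_model = SentenceTransformer("all-MiniLM-L6-v2")

# Stable Diffusion pipeline for conceptual images; run on the GPU when one is available.
pipe = StableDiffusionPipeline.from_pretrained("runwayml/stable-diffusion-v1-5")
pipe = pipe.to("cuda" if torch.cuda.is_available() else "cpu")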

Creating the knowledge base

import faiss
import numpy as np

documents = [
    "Transformers use self-attention mechanisms.",
    "RAG retrieves external knowledge before answering.",
    "Multi-agent systems divide tasks across agents.",
]

# Embed the documents and store them in a flat (exhaustive) L2 index.
embeddings = embedding_model.encode(documents)
index = faiss.IndexFlatL2(embeddings.shape[1])
index.add(np.array(embeddings))
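For intuition about what a flat L2 index does at query time, here is a dependency-free sketch of exhaustive search that, like `IndexFlatL2`, ranks by squared Euclidean distance. The function `flat_l2_search` is an illustrative stand-in, not FAISS code.

```python
def flat_l2_search(vectors, query, k):
    """Return (distances, indices) of the k stored vectors closest to query."""
    scored = []
    for i, vec in enumerate(vectors):
        # Squared Euclidean distance; the square root is skipped because
        # it does not change the ranking.
        dist = sum((a - b) ** 2 for a, b in zip(vec, query))
        scored.append((dist, i))
    scored.sort()
    top = scored[:k]
    return [d for d, _ in top], [i for _, i in top]

vectors = [[0.0, 0.0], [1.0, 0.0], [0.0, 3.0]]
distances, indices = flat_l2_search(vectors, [0.9, 0.1], k=2)
print(indices)
```

Every stored vector is compared against the query, which is fine for three documents and is the reason approximate indexes exist for larger collections.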

Building the tool selection agent

def tool_selector_agent(query):
    # Ask the model for a single label so the orchestrator can branch on it.
    prompt = f"""
    Classify the query:
    - diagram → structured explanation
    - image → conceptual visual
    - none → no visual needed

    Reply with exactly one word: diagram, image, or none.

    Query: {query}
    """
    response = client.chat.completions.create(
        model="openai/gpt-oss-120b",
        messages=[{"role": "user", "content": prompt}]
    )
    return response.choices[0].message.content.strip().lower()
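Model replies are free text, so a substring check can misroute a reply such as "none - a diagram is not needed". One possible safeguard, sketched here with the hypothetical helper `parse_tool_choice`, is to take the first valid label that appears as a whole word and fall back to text only.

```python
import re

VALID_TOOLS = ("diagram", "image", "none")

def parse_tool_choice(raw, default="none"):
    """Map a free-form model reply onto one of the three labels."""
    # Split into lowercase words so punctuation and markdown markers are ignored.
    for word in re.findall(r"[a-z]+", raw.lower()):
        if word in VALID_TOOLS:
            return word  # first valid label wins
    return default       # nothing recognizable: respond with text only

print(parse_tool_choice("Diagram."))
print(parse_tool_choice("none - a diagram is not needed"))
```

Taking the first label is a heuristic, not a guarantee; a stricter variant could reject any reply longer than one word and retry the classification.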

Retrieval and text generation

def retriever(query):
    # Embed the query and fetch the two nearest documents from the index.
    query_embedding = embedding_model.encode([query])
    _, I = index.search(np.array(query_embedding), 2)
    return [documents[i] for i in I[0]]
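One edge case is worth guarding against: FAISS pads a result row with -1 when the index holds fewer than k vectors, and in Python `documents[-1]` silently returns the last document. The sketch below, using the hypothetical helper `select_documents`, filters those placeholders out.

```python
def select_documents(indices, documents):
    """Map one row of search result indices to documents, skipping -1 placeholders."""
    return [documents[i] for i in indices if 0 <= i < len(documents)]

docs = ["only document in the index"]
# Shape of a result row when k=2 but only one vector is stored.
row = [0, -1]
hits = select_documents(row, docs)
print(hits)
```

With the three-document knowledge base and k=2 used in this article the padding never occurs, so this matters mainly when the index can be smaller than k.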

def text_agent(query, context):
    prompt = f"""
    Answer clearly using the context.

    Context:
    {context}

    Question:
    {query}
    """
    response = client.chat.completions.create(
        model="openai/gpt-oss-120b",
        messages=[{"role": "user", "content": prompt}]
    )
    return response.choices[0].message.content

Diagram generation (Mermaid)

from IPython.display import Markdown, display

def diagram_agent(query):
    prompt = f"""
    Convert this into a Mermaid diagram.
    Return only the Mermaid code, without code fences or commentary.

    Query: {query}
    """
    response = client.chat.completions.create(
        model="openai/gpt-oss-120b",
        messages=[{"role": "user", "content": prompt}]
    )
    code = response.choices[0].message.content.strip()
    # Rendering requires a front end that supports Mermaid fences in Markdown output.
    display(Markdown(f"```mermaid\n{code}\n```"))
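Models often wrap their reply in a code fence or add commentary around it, which would produce a fence inside a fence when the reply is wrapped again. A possible cleanup step is sketched below; `extract_mermaid` and `wrap_mermaid` are hypothetical helpers, and the fence string is built at runtime only to keep a literal fence out of this example.

```python
import re

FENCE = "`" * 3  # three backticks, built here to avoid a literal fence in the example

def extract_mermaid(reply):
    """Return the Mermaid source from a reply, with or without a surrounding fence."""
    pattern = re.escape(FENCE) + r"(?:mermaid)?[ \t]*\n(.*?)" + re.escape(FENCE)
    match = re.search(pattern, reply, flags=re.DOTALL)
    body = match.group(1) if match else reply
    return body.strip()

def wrap_mermaid(source):
    """Wrap bare Mermaid source in exactly one fence."""
    return f"{FENCE}mermaid\n{source}\n{FENCE}"

reply = f"Here is the diagram:\n{FENCE}mermaid\nflowchart TD\n    A --> B\n{FENCE}\nHope it helps."
clean = extract_mermaid(reply)
print(wrap_mermaid(clean))
```

This only normalizes the wrapper; it does not check that the Mermaid code itself is valid.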

Image generation (Diffusion)

def image_prompt_agent(query):
    prompt = f"""
    Create a clean, minimal image prompt.
    No text, no labels, professional style.

    Query: {query}
    """
    response = client.chat.completions.create(
        model="openai/gpt-oss-120b",
        messages=[{"role": "user", "content": prompt}]
    )
    return response.choices[0].message.content

def image_agent(prompt):
    # Run the diffusion pipeline and show the first generated image.
    image = pipe(prompt).images[0]
    display(image)
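Diffusion prompts often do not honor negations such as "no text", so exclusions are usually expressed through the pipeline's `negative_prompt` argument instead. The sketch below assembles keyword arguments for such a call; `build_image_kwargs` and the specific values chosen are illustrative assumptions, not tuned settings.

```python
def build_image_kwargs(prompt, steps=30, guidance=7.5):
    """Assemble keyword arguments for a Stable Diffusion pipeline call."""
    return {
        "prompt": prompt.strip(),
        # Things to steer away from, phrased as content rather than as "no ...".
        "negative_prompt": "text, letters, labels, watermark, blurry",
        "num_inference_steps": steps,   # fewer steps run faster, with less refinement
        "guidance_scale": guidance,     # how strongly the prompt is followed
    }

kwargs = build_image_kwargs("  minimal illustration of a glowing neural network brain ")
# With the pipeline from this article loaded, the call would be:
#   image = pipe(**kwargs).images[0]
print(kwargs["negative_prompt"])
```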

Final orchestrator

def hybrid_rag_system(query):
    # Decide on the output format, then retrieve context and answer in text.
    tool = tool_selector_agent(query)
    docs = retriever(query)
    context = " ".join(docs)
    answer = text_agent(query, context)
    print("\nAnswer:\n", answer)

    # Add a visual only when the selector asked for one.
    if "diagram" in tool:
        diagram_agent(query)
    elif "image" in tool:
        prompt = image_prompt_agent(query)
        image_agent(prompt)

Example usage

hybrid_rag_system("Explain transformer architecture")
hybrid_rag_system("Show an AI brain concept")
hybrid_rag_system("What is RAG?")

What makes this system different?

This system is fundamentally different from traditional RAG pipelines because it introduces decision-making and adaptability. Instead of treating every query the same way, it dynamically selects the appropriate response format.

It also separates visual generation into structured and creative outputs, ensuring that diagrams remain accurate while images remain visually meaningful.

Most importantly, it reflects how modern AI systems are actually built—by combining multiple specialized components rather than relying on a single model.

Conclusion

The evolution from RAG to Agentic Multimodal RAG represents a shift from static pipelines to intelligent systems. It is no longer enough for AI to retrieve and generate text. It must also decide how to present information in the most effective way.

By combining retrieval, reasoning, and multimodal generation, this system demonstrates how AI can move closer to real-world problem solving. It is not just about answering questions anymore—it is about delivering answers in the most useful form possible.

Author


Naveen Pandey has more than 2 years of experience in data science and machine learning. He is an experienced Machine Learning Engineer with a strong background in data analysis, natural language processing, and machine learning. Holding a Bachelor of Science in Information Technology from Sikkim Manipal University, he excels in leveraging cutting-edge technologies such as Large Language Models (LLMs), TensorFlow, PyTorch, and Hugging Face to develop innovative solutions.
